Course Feedback Extension

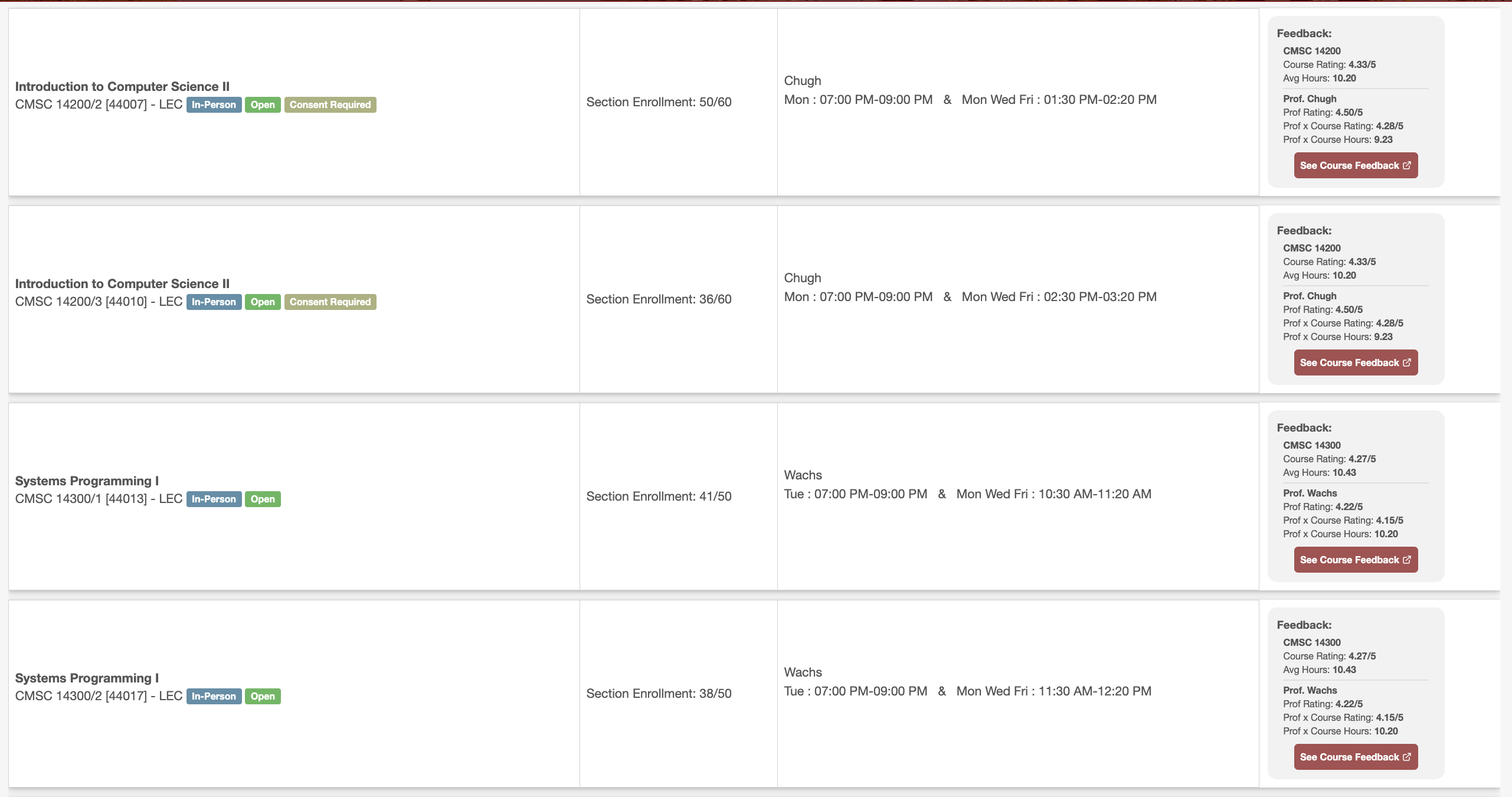

The UChicago course feedback extension shows course feedback data directly on the course search page. Over a student's time at UChicago, that saves hours otherwise spent hopping between the course search and the course feedback database.

The Problem

Each quarter at UChicago, students go through pre-registration and an add/drop period. During this window they have a week to sort through classes that satisfy their requirements and match their interests, and to put together a rewarding and enjoyable quarter.

A big part of this involves searching for and analyzing course feedback data. While this should be straightforward, it isn't. UChicago stores the course listings and the feedback data in two separate databases. Comparing courses therefore means hopping between them, running searches that take more time than you'd like, and managing multiple tabs for each course.

Then, even once you find the course feedback data, it's presented unclearly and with far too much fluff to make sense of it easily. There are really only three things you need to know about a course: 1. how good is the course, 2. how good is the professor, and 3. how many hours will it take every week?

Each quarter, this takes about 30 minutes. Across 12 quarters, however, that adds up to roughly six hours of wasted time.

The Solution

I built a tool that stores and analyzes course feedback data and pulls out the most important insights. It then presents this data directly on the course search page, so you can quickly compare courses.

Importantly, it immediately gives you the trifecta of data you want: course quality, professor quality, and weekly hours. It also lets you search directly for the course feedback PDFs if you want more detail.
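As a rough illustration of boiling a stack of evaluations down to those three numbers, here is a minimal Python sketch. It is not the extension's actual code.

The sketch assumes that each evaluation response has already been parsed into:
- a course rating on a 1 to 5 scale,
- an instructor rating on a 1 to 5 scale, and
- a self-reported hours-per-week figure.

The `EvalResponse`, `CourseSummary`, and `summarize` names are hypothetical and exist only for this example.

```python
from dataclasses import dataclass
from statistics import mean, median


@dataclass
class EvalResponse:
    """One student's parsed evaluation (hypothetical shape, for illustration only)."""
    course_rating: float      # assumed 1-5 scale
    instructor_rating: float  # assumed 1-5 scale
    hours_per_week: float     # self-reported weekly workload


@dataclass
class CourseSummary:
    """The three numbers a student actually wants, plus the sample size."""
    course_rating: float
    instructor_rating: float
    hours_per_week: float
    n_responses: int


def summarize(responses: list[EvalResponse]) -> CourseSummary:
    """Average the two ratings and take the median weekly workload."""
    if not responses:
        raise ValueError("no evaluation responses to summarize")
    return CourseSummary(
        course_rating=round(mean(r.course_rating for r in responses), 2),
        instructor_rating=round(mean(r.instructor_rating for r in responses), 2),
        # The median is less sensitive than the mean to a few extreme hour estimates.
        hours_per_week=median(r.hours_per_week for r in responses),
        n_responses=len(responses),
    )


if __name__ == "__main__":
    sample = [
        EvalResponse(4.5, 5.0, 8),
        EvalResponse(4.0, 4.5, 10),
        EvalResponse(3.5, 4.0, 20),
    ]
    print(summarize(sample))
```

Using the median for hours is a design choice, not something the post specifies. It keeps one student who reports 40 hours from inflating a course's workload. The real rating scales and aggregation would need to be checked against the actual evaluation forms.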

The technical details

This was the first project I built by relying heavily on an LLM to write the code. This was before the days of Cursor and Claude Code, so it involved a lot of copying and pasting.

The frontend uses simple JavaScript to:
- detect page mutations,
- pull the course information needed to fetch the data, and
- overlay that data on the page.

Shoutout to Ron Kiehn for building a nice frontend. He's a really cool guy.
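The extension does this in JavaScript in the browser. The standard tool for detecting DOM mutations there is the `MutationObserver` API.

The Python sketch below only illustrates the parsing step in the middle: pulling course identifiers out of page text that has changed, so they can be used as lookup keys. The course-code format it assumes is a four-letter department code followed by a five-digit number, as in `CMSC 15100`. Both that format and the `extract_course_codes` name are assumptions for this example.

```python
import re

# Assumed format: four uppercase letters, an optional space, then five digits.
COURSE_CODE = re.compile(r"\b([A-Z]{4})\s?(\d{5})\b")


def extract_course_codes(text: str) -> list[str]:
    """Return unique course codes in order of first appearance, normalized to 'DEPT NNNNN'."""
    seen: dict[str, None] = {}  # dict preserves insertion order, so it doubles as an ordered set
    for dept, number in COURSE_CODE.findall(text):
        seen.setdefault(f"{dept} {number}", None)
    return list(seen)


if __name__ == "__main__":
    snippet = "CMSC 15100 Intro to CS I ... ECON20000 Elements of Economics ... CMSC 15100"
    print(extract_course_codes(snippet))
```

Deduplicating matters because a mutation callback can fire many times while search results re-render. Each course only needs to be looked up once per batch.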

On the backend, all the information lives in a sqlite3 database hosted on PythonAnywhere. I primarily used Selenium and BS4 (BeautifulSoup) to scrape the copious amounts of course data. The first time I built this, I would caffeinate my terminal to run it overnight, since the scraping took 10+ hours.
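Here is a minimal sketch of what the storage side could look like, using Python's built-in `sqlite3` module. The scraping itself is not shown.

The post doesn't describe the real schema, so everything below is hypothetical:
- the `evaluations` table and its columns,
- the `store` and `lookup` helpers, and
- the example rows.

Each scraped course offering becomes one row. A lookup for a course code averages across all stored offerings of that course.

```python
import sqlite3
from typing import Optional

# Hypothetical schema: one row per (course, quarter, instructor) offering.
SCHEMA = """
CREATE TABLE IF NOT EXISTS evaluations (
    course_code       TEXT NOT NULL,
    quarter           TEXT NOT NULL,
    instructor        TEXT NOT NULL,
    course_rating     REAL,
    instructor_rating REAL,
    hours_per_week    REAL,
    PRIMARY KEY (course_code, quarter, instructor)
)
"""


def store(conn: sqlite3.Connection, rows: list[tuple]) -> None:
    """Upsert scraped rows so that re-running the scraper doesn't create duplicates."""
    conn.executemany("INSERT OR REPLACE INTO evaluations VALUES (?, ?, ?, ?, ?, ?)", rows)
    conn.commit()


def lookup(conn: sqlite3.Connection, course_code: str) -> Optional[dict]:
    """Average each metric over every stored offering of a course (None if unseen)."""
    avg_course, avg_instr, avg_hours, count = conn.execute(
        "SELECT AVG(course_rating), AVG(instructor_rating), AVG(hours_per_week), COUNT(*) "
        "FROM evaluations WHERE course_code = ?",
        (course_code,),
    ).fetchone()
    if count == 0:
        return None
    return {
        "course_rating": avg_course,
        "instructor_rating": avg_instr,
        "hours_per_week": avg_hours,
        "offerings": count,
    }


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")  # a real deployment would use a file path instead
    conn.execute(SCHEMA)
    store(conn, [
        ("CMSC 15100", "Autumn 2022", "Instructor A", 4.5, 4.8, 9.0),
        ("CMSC 15100", "Autumn 2023", "Instructor B", 4.1, 4.2, 11.0),
    ])
    print(lookup(conn, "CMSC 15100"))
    print(lookup(conn, "ECON 20000"))
```

SQLite has no built-in median aggregate, so this sketch uses `AVG`. A fuller version would probably also filter the instructor rating by whoever is teaching the course this quarter, rather than averaging across all instructors.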

Now I've got the scraping down to about an hour. I'm happy to share that I'm handing the project off to Matt Lin, a young UChicago lad, who will keep it rolling and build it out for the next generation of UChicagoans.